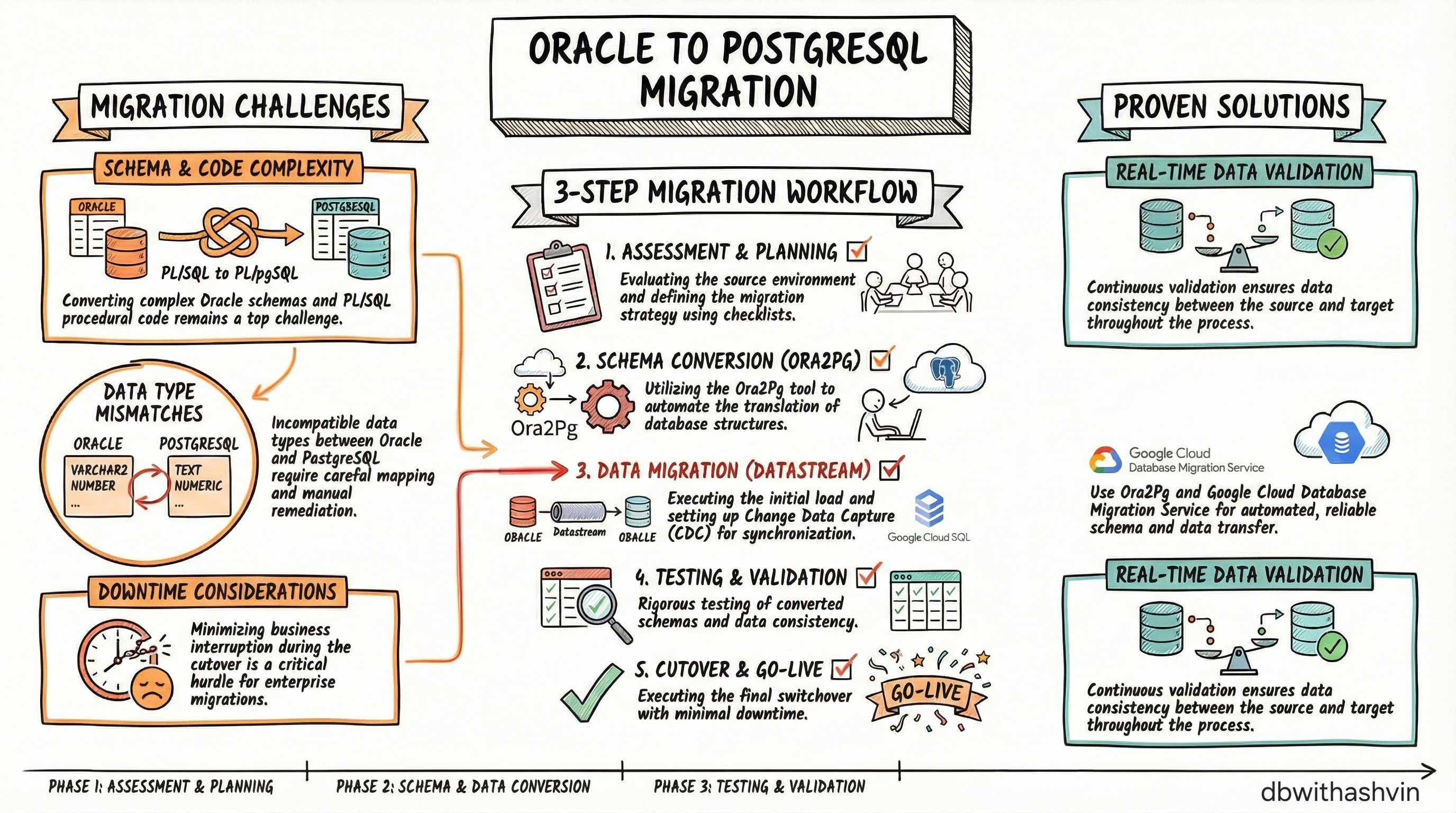

Migrating a mission-critical Oracle environment to PostgreSQL on Cloud SQL is a complex architectural shift that requires more than just moving data. It demands a complete translation of database intelligence — from PL/SQL remediations and schema conversions to real-time Change Data Capture (CDC) pipelines — all with the non-negotiable constraint of zero downtime.

1. Why This Migration Is Hard

Oracle and PostgreSQL are fundamentally different engines in philosophy and architecture. The migration moves you from Oracle's block-level storage and Tablespaces to PostgreSQL's Multi-Version Concurrency Control (MVCC). This isn't just an export-import exercise — it requires rethinking query optimization, procedural logic, and concurrency handling from the ground up.

The strategy is supported by three primary technical pillars:

- Schema Conversion — Structural translation of tables, indexes, and constraints from Oracle's proprietary formats to PostgreSQL-compatible equivalents.

- PL/SQL Remediation — Technical redesign of stored procedures, packages, and triggers to maintain functional parity.

- Change Data Capture (CDC) — Real-time replication to synchronize incremental changes between the initial snapshot and the final cutover, ensuring zero data loss.

2. Critical Technical Challenges

| Challenge Category | Technical Impact |

|---|---|

| Schema Conversion | Requires precise mapping of Oracle-specific objects (Sequences, Synonyms) to PostgreSQL equivalents while maintaining structural integrity. |

| PL/SQL Remediation | Oracle Packages must be decomposed into individual Functions and Procedures organized within PostgreSQL Schemas. Non-portable logic is the most labor-intensive phase. |

| Data Type Mismatches | Differences in numeric precision (NUMBER vs. NUMERIC) and

character handling can cause silent data corruption if not mapped correctly. |

| Storage Model Differences | Moving from Oracle's block-level I/O to PostgreSQL's MVCC requires specific Autovacuum tuning and different approach to bloat management in Cloud SQL. |

3. The Tooling Ecosystem

Selecting the right toolset is the difference between a seamless transition and a cascading service outage. The stack is divided across three responsibilities:

Schema & Code Conversion

- Ora2Pg — The industry-standard open-source tool for automated schema conversion. It

provides comprehensive difficulty scores for each object and handles the bulk of DDL translation

(e.g., automatically converting

SYSDATE→CURRENT_TIMESTAMP,NVL→COALESCE). - Google Cloud Open-Source Toolkit — Identifies proprietary Oracle features lacking direct equivalents, flagging them before they become blockers.

Data Migration & Orchestration

- Google Cloud Database Migration Service (DMS) — The primary orchestration engine managing the migration lifecycle and the critical Promotion event.

- Dataflow (Datastream to PostgreSQL template) — The primary vehicle consuming CDC Avro files from Cloud Storage and executing the corresponding SQL on the Cloud SQL destination with high throughput.

CDC & Real-Time Sync

- Datastream — Serverless CDC integrating directly with Oracle Redo Logs (via

LogMiner) to capture every

INSERT,UPDATE, andDELETEin real-time. Outputs in Avro format. Best for 1:1 replication scenarios. - Striim — Enterprise-grade platform for in-motion data transformation. Preferred when migrations require real-time schema evolution, data masking, or PII scrubbing during flight.

4. PL/SQL Remediation Deep-Dive

Remediating procedural code is the most labor-intensive phase. PostgreSQL does not support Oracle "Packages," meaning business logic must be logically reorganized into PostgreSQL Schemata. The technical sequence for doing this safely:

- Initial Automated Conversion — Run Ora2Pg to convert baseline DDL and simple procedural objects. This handles standard syntax shifts automatically.

- Manual Remediation Identification — Isolate complex procedural logic automated tools miss: Oracle-specific triggers, global variables within packages, and autonomous transactions.

- Enterprise-Grade Tooling — Deploy the Newt Global toolkit for high-complexity stored procedures and triggers that standard tools cannot resolve, significantly reducing manual re-write effort.

5. The CDC Streaming Workflow

Achieving zero downtime requires a two-phase data strategy: an Initial Load (historical state) and a CDC Sync (the real-time delta).

- Source Configuration — Enable Oracle supplemental logging. Datastream connects to Redo Logs to capture incremental changes without significant overhead on production.

- The Streaming Pipeline — Datastream writes change events to Cloud Storage (in Avro format). A Dataflow job using the "Datastream to PostgreSQL" template processes these files and applies the SQL to the Cloud SQL destination.

- In-Motion Transformation (if needed) — If the destination schema is not a direct mirror (e.g., PII masking, data cleansing), deploy Striim to transform data before it hits the destination disk.

6. Validation & Final Cutover Checklist

Before triggering the final switchover, every item below must be validated:

- ✅ Data Validation — Deep checksum-level comparison between Oracle source and Cloud SQL destination to confirm 100% record accuracy.

- ✅ Functional Verification — Unit tests on all remediated PL/SQL objects, validating de-packaged Functions and Procedures return identical results to the Oracle originals.

- ✅ DMS Promotion — Trigger the "Promotion" action in DMS. This stops CDC replication and transforms the destination into a standalone, writable production instance.

- ✅ Final Switchover — Update all application connection strings to point to the new Cloud SQL endpoint and decommission the legacy Oracle instance.